Trust in Virtual Reality-based Intelligent Agent Advisors

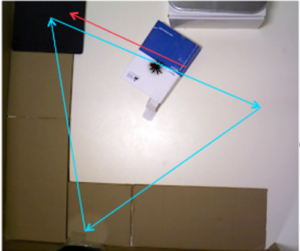

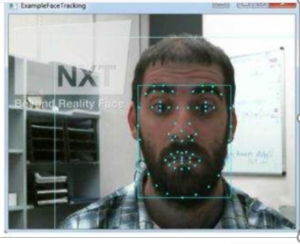

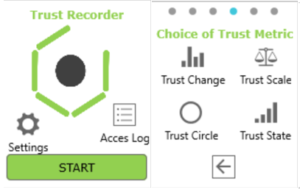

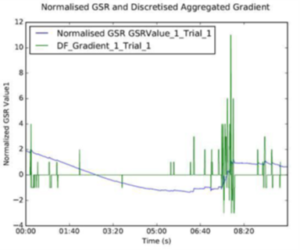

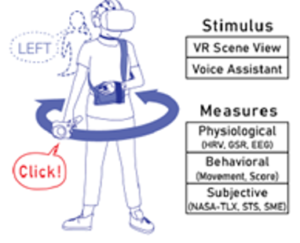

In this project we replicated the Treisman & Gelade (1980) visual conjunction search task in a 3D virtual reality environment, using the HTC Vive lab of CRP member Mark Billinghurst at the University of South Australia. A virtual agent gave verbal directional cues (‘left’, ‘right’) in three conditions: a no-cue baseline, cues that were 50% accurate, and cues that were 100% accurate. We collected EEG, heart rate variability (HRV), galvanic skin response (GSR), subjective measures, and a novel behavioural measure of trust. This behavioural measure was the congruency or incongruency between the direction the agent advised and the direction in which the participant actually moved their head. The work was published at the 2019 VRST conference and followed up with a journal publication in 2020.
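The behavioural trust metric can be illustrated with a minimal Python sketch. It is not the analysis used in the published work. The `Trial` record, the signed head-yaw change used as input (positive taken to mean a rightward turn), the 5° turn threshold, the condition labels, and the decision to exclude trials without a clear turn are all hypothetical assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Optional, Sequence


@dataclass
class Trial:
    condition: str          # hypothetical labels, e.g. "baseline", "50%", "100%"
    advised: Optional[str]  # "left", "right", or None when the agent gave no cue
    head_yaw_change: float  # hypothetical: signed yaw change in degrees; positive = rightward


def head_direction(yaw_change: float, threshold: float = 5.0) -> Optional[str]:
    """Classify the head turn; movements smaller than the threshold count as no turn."""
    if yaw_change >= threshold:
        return "right"
    if yaw_change <= -threshold:
        return "left"
    return None


def congruency_rate(trials: Sequence[Trial], threshold: float = 5.0) -> Optional[float]:
    """Fraction of scorable cued trials in which the head turned the advised way."""
    scored = []
    for t in trials:
        if t.advised is None:
            continue  # baseline trials carry no advice, so congruency is undefined
        moved = head_direction(t.head_yaw_change, threshold)
        if moved is None:
            continue  # assumption: no clear turn is excluded, not counted as incongruent
        scored.append(moved == t.advised)
    return sum(scored) / len(scored) if scored else None


def congruency_by_condition(
    trials: Sequence[Trial], threshold: float = 5.0
) -> Dict[str, Optional[float]]:
    """Group trials by cue-accuracy condition and compute each group's congruency rate."""
    groups: Dict[str, list] = defaultdict(list)
    for t in trials:
        groups[t.condition].append(t)
    return {cond: congruency_rate(ts, threshold) for cond, ts in groups.items()}


if __name__ == "__main__":
    demo = [
        Trial("100%", "left", -20.0),   # congruent
        Trial("100%", "right", 12.0),   # congruent
        Trial("100%", "right", -8.0),   # incongruent
        Trial("100%", "left", 2.0),     # no clear turn, excluded
        Trial("baseline", None, 15.0),  # no cue, excluded
    ]
    print(congruency_by_condition(demo))
```

Under these assumptions, a higher congruency rate in a condition would indicate that participants followed the agent's advice more often, which is the sense in which head movement serves as a behavioural proxy for trust.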